Your Search Is Broken and Everyone Knows It

The average employee spends 20-30% of their workday searching for information. McKinsey put the figure at close to 20%, and more recent surveys push it higher.

Think about that for a team of 50. You’re paying 10-15 people’s worth of salary just for the privilege of not being able to find anything.

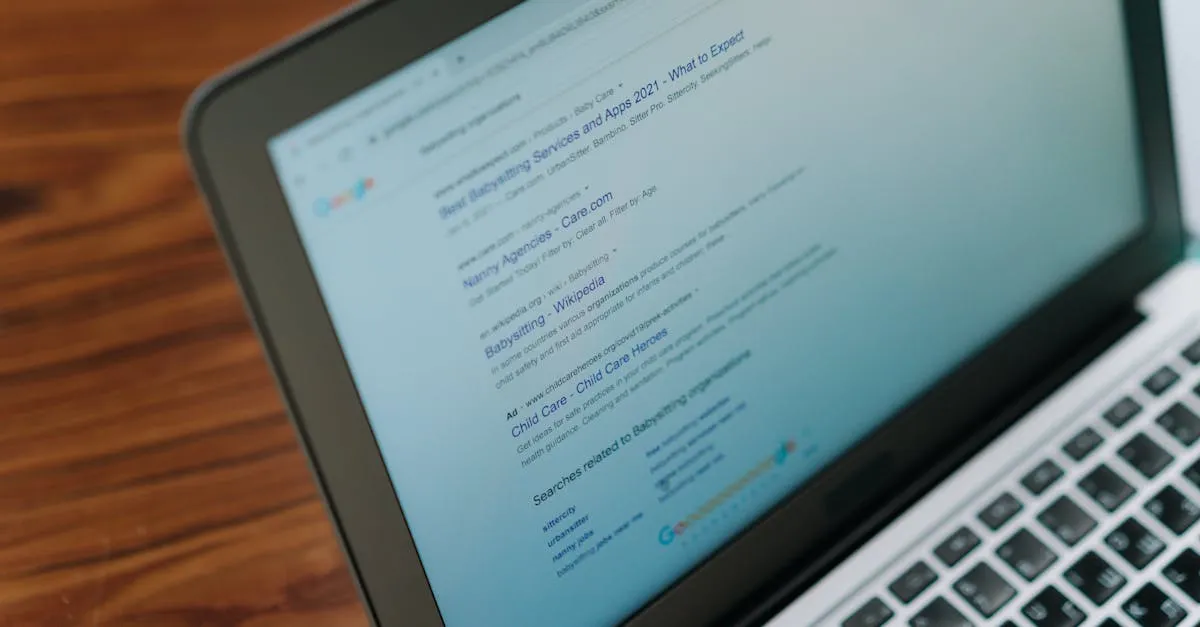

Traditional search tools match keywords. “Refund policy enterprise” returns every document containing those words. Pages of irrelevant results.

Your team scrolls, clicks, scrolls more, gives up, asks a colleague.

AI-enhanced search understands meaning. “What’s our refund policy for enterprise clients?” returns the specific paragraph from your contract template. One result. The right one.

Why Traditional Search Fails

Keyword search was built for the internet, where redundancy helps. Finding ten pages about a topic is fine when you’re researching.

Internal knowledge is different. You need one specific answer from one specific document. Keywords can’t handle that because they don’t understand context.

“How did we handle the billing issue on Project Atlas?” requires understanding that “billing issue,” “invoice dispute,” and “payment problem” all mean the same thing. Keyword search treats them as different queries. Semantic search treats them as equivalent.
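To make the difference concrete, here is a minimal sketch. The three-number vectors are hand-made for illustration (a real system gets its embeddings from a trained model), and the function names `keyword_overlap` and `cosine` are our own. The point is only that phrases sharing no words can still sit close together once they are represented by meaning.

```python
import math

def keyword_overlap(a: str, b: str) -> int:
    """Count the words two phrases share, the way a plain keyword matcher would."""
    return len(set(a.lower().split()) & set(b.lower().split()))

def cosine(u: list, v: list) -> float:
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm

# ASSUMPTION: hand-made toy vectors, not output from any real embedding model.
# The dimensions loosely stand for: money/payments, conflict/problem, scheduling.
TOY_EMBEDDINGS = {
    "billing issue":   [0.9, 0.8, 0.1],
    "invoice dispute": [0.8, 0.9, 0.0],
    "payment problem": [0.9, 0.7, 0.1],
    "meeting agenda":  [0.0, 0.1, 0.9],
}

query = "billing issue"
for phrase, vector in TOY_EMBEDDINGS.items():
    if phrase == query:
        continue
    shared = keyword_overlap(query, phrase)
    similarity = cosine(TOY_EMBEDDINGS[query], vector)
    print(f"{phrase!r}: shared words={shared}, similarity={similarity:.2f}")
```

With these toy values, “invoice dispute” and “payment problem” share no words with the query yet score as near neighbours, while “meeting agenda” scores low.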

90% of knowledge workers who use AI-powered search report higher productivity. The productivity boost averages 40%.

The Architecture

An AI-enhanced search system has three layers, similar to a RAG system but optimized specifically for retrieval quality.

The indexing layer processes your documents. It splits them into meaningful chunks, generates embeddings (numerical representations of meaning), and stores them in a vector database along with metadata.
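A rough sketch of the indexing layer, under loud assumptions: `toy_embed` is a hashed bag-of-words stand-in for a real embedding model (it captures word overlap, not meaning), the “vector database” is a plain Python list, and every name here is hypothetical. It only shows the shape of the pipeline: split, embed, store with metadata.

```python
import hashlib
import math
import re
from dataclasses import dataclass

EMBED_DIM = 64  # toy size; real embedding models use far more dimensions

def toy_embed(text: str) -> list:
    """ASSUMPTION: stand-in for a real embedding model (hashed bag-of-words)."""
    vec = [0.0] * EMBED_DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.sha256(token.encode()).hexdigest(), 16) % EMBED_DIM
        vec[bucket] += 1.0
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]  # unit length, so dot product equals cosine

def split_into_chunks(text: str, max_words: int = 40) -> list:
    """Split on blank lines, then cap each chunk at max_words words."""
    chunks = []
    for paragraph in re.split(r"\n\s*\n", text.strip()):
        words = paragraph.split()
        for start in range(0, len(words), max_words):
            chunks.append(" ".join(words[start:start + max_words]))
    return chunks

@dataclass
class IndexedChunk:
    text: str
    embedding: list
    metadata: dict

def index_document(text: str, metadata: dict, store: list) -> int:
    """Chunk a document, embed each chunk, and append it to the store.

    Returns the number of chunks added.
    """
    chunks = split_into_chunks(text)
    for position, chunk in enumerate(chunks):
        store.append(IndexedChunk(chunk, toy_embed(chunk), {**metadata, "chunk": position}))
    return len(chunks)

# Usage with made-up sample text and metadata.
store = []
sample = "Refunds are handled by the finance team.\n\nEscalations go to the account manager."
added = index_document(sample, {"title": "Refund SOP", "department": "finance"}, store)
print(added, store[0].metadata)
```

In a real build you would swap `toy_embed` for calls to your chosen embedding model and the list for a vector database; the chunking rule (blank lines plus a word cap) is one simple option among many.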

The query layer takes a user’s question, converts it to an embedding, and performs semantic similarity search against your index. It retrieves the most relevant chunks, ranked by meaning, not just keyword overlap.
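The query step can be sketched in a few lines. Again, treat this as an illustration under assumptions: `toy_embed` here is a simple word-count vector standing in for a real embedding model, so it ranks by word overlap rather than true meaning, and the sample chunks are invented. The ranking mechanics (embed the question, score every chunk by cosine similarity, keep the top few) are the part that carries over.

```python
import math
import re
from collections import Counter

def toy_embed(text: str) -> Counter:
    """ASSUMPTION: word-count vector as a stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def search(query: str, index: list, top_k: int = 3) -> list:
    """Return the top_k (score, text) pairs, best match first."""
    query_vec = toy_embed(query)
    scored = [(cosine(query_vec, vec), text) for text, vec in index]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]

# Made-up sample chunks, embedded once at indexing time.
chunks = [
    "Enterprise clients may request a refund within 30 days of invoice.",
    "The office kitchen is cleaned every Friday afternoon.",
    "Project Atlas kickoff notes and staffing plan.",
]
index = [(chunk, toy_embed(chunk)) for chunk in chunks]

results = search("refund policy for enterprise clients", index, top_k=2)
for score, text in results:
    print(f"{score:.2f}  {text}")
```

A production system would use an approximate nearest-neighbour index instead of scoring every chunk, but for a few thousand chunks a linear scan like this is often fast enough.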

The presentation layer formats results. Highlighted passages, source documents, confidence scores. The user sees exactly where the answer came from and can verify it.
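Here is one way the presentation step might look, as a sketch only. The `SearchHit` structure, the `**` highlight markers, and the rule of skipping words of three letters or fewer are all our assumptions; adapt them to whatever your chat tool or search UI renders.

```python
import re
from dataclasses import dataclass

@dataclass
class SearchHit:
    passage: str
    source: str   # where the passage came from, so the user can verify it
    score: float  # similarity between 0 and 1, shown as a confidence figure

def highlight(passage: str, query: str) -> str:
    """Wrap words from the query in ** markers wherever they appear in the passage."""
    # Skip very short words ("for", "the") so they do not clutter the highlight.
    terms = {t for t in re.findall(r"[a-z0-9]+", query.lower()) if len(t) > 3}

    def mark(match):
        word = match.group(0)
        return f"**{word}**" if word.lower() in terms else word

    return re.sub(r"[A-Za-z0-9]+", mark, passage)

def render(hit: SearchHit, query: str) -> str:
    """Format one hit as passage, source, and confidence on separate lines."""
    return (
        f"{highlight(hit.passage, query)}\n"
        f"Source: {hit.source}\n"
        f"Confidence: {hit.score:.0%}"
    )

# Made-up example hit.
hit = SearchHit("Enterprise clients may request a refund.", "contract-template.docx", 0.87)
print(render(hit, "refund policy for enterprise clients"))
```

Showing the source next to every passage is the design choice that matters most here: it lets people check the answer instead of trusting it blindly.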

The whole round-trip takes 1-3 seconds. Fast enough for real-time use in Slack, Teams, or a dedicated search interface.

What to Index

Start with the documents your team searches for most frequently.

SOPs and process documentation. The stuff people ask about every week because nobody remembers which folder it’s in.

Product documentation and knowledge base articles. Both internal technical docs and customer-facing help content.

Project artifacts. Meeting notes, decision logs, architecture documents, post-mortems. The institutional memory that usually lives in one person’s head.

Slack and email archives (with appropriate privacy controls). The conversations where actual decisions get made, not just the formal documentation.

CRM notes and customer history. So your support team doesn’t have to ask “can someone pull up the history on this account?” every time.

The Metadata Challenge

Raw text search isn’t enough. Context matters. A document that says “revenue increased 15%” is useless without knowing which quarter, which product line, and which region.

Attach metadata to every indexed chunk: document title, creation date, author, department, project name, document type. This metadata enables filtered search: “Show me only engineering decisions from Q3 2025.”
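A filtered query like that one boils down to a metadata check before (or alongside) the similarity ranking. The sketch below assumes a hypothetical `Chunk` record with `department`, `doc_type`, and `created` fields; your field names and storage will differ, and most vector databases offer their own filter syntax for this.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Chunk:
    text: str
    department: str
    doc_type: str
    created: date

def quarter_bounds(year: int, quarter: int) -> tuple:
    """Return (start, end) dates for a calendar quarter; end is exclusive."""
    start_month = 3 * (quarter - 1) + 1
    start = date(year, start_month, 1)
    end = date(year + 1, 1, 1) if quarter == 4 else date(year, start_month + 3, 1)
    return start, end

def filter_chunks(chunks: list, department: str, doc_type: str, year: int, quarter: int) -> list:
    """Keep only chunks matching the department, document type, and quarter."""
    start, end = quarter_bounds(year, quarter)
    return [
        c for c in chunks
        if c.department == department
        and c.doc_type == doc_type
        and start <= c.created < end
    ]

# Made-up sample records.
chunks = [
    Chunk("Move the queue to a managed service.", "engineering", "decision", date(2025, 8, 14)),
    Chunk("Adopt a new on-call rotation.", "engineering", "decision", date(2025, 10, 2)),
    Chunk("Q3 pricing update.", "sales", "decision", date(2025, 9, 1)),
]

q3_engineering = filter_chunks(chunks, "engineering", "decision", 2025, 3)
print([c.text for c in q3_engineering])
```

Only the first record survives: the second falls in Q4 and the third belongs to sales. None of this works unless the metadata was attached at indexing time.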

This is where most implementations cut corners. Don’t. Good metadata is the difference between a search tool your team loves and one they abandon after a week.

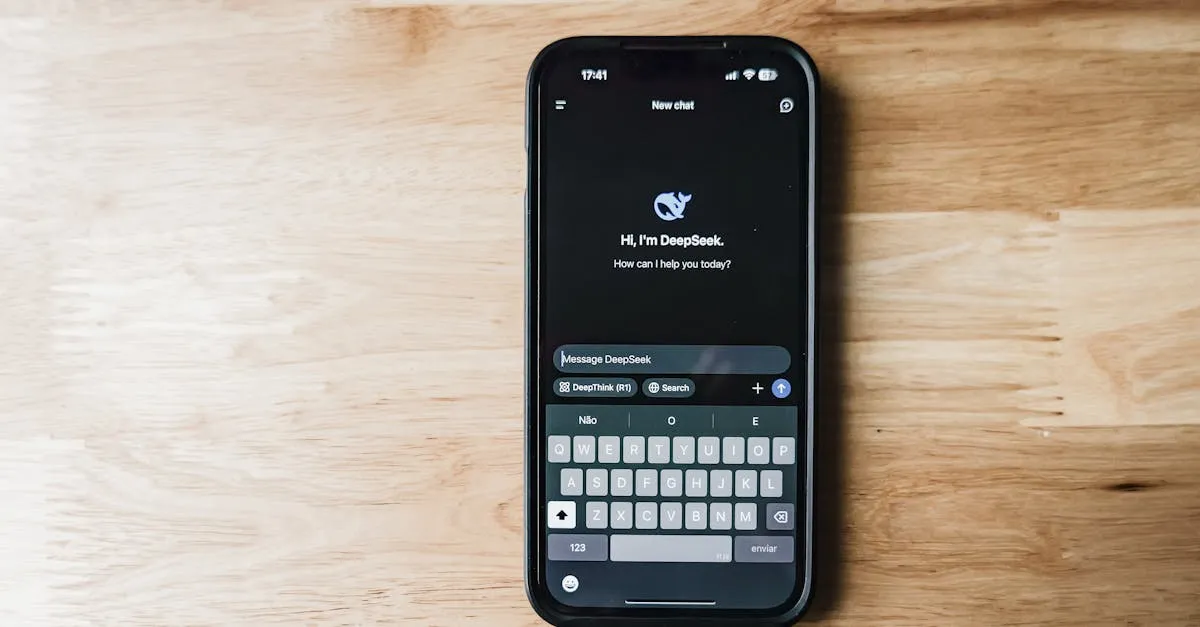

Build vs. Buy

Off-the-shelf AI search tools (Glean, Guru, Algolia NeuralSearch) work for companies with standard document types and basic search needs. They integrate with common tools out of the box. Pricing runs EUR 10-30 per user per month.

Custom builds make sense when you need deep integration with proprietary systems, handle specialized document types, or have strict data residency requirements. Build cost: EUR 25,000-60,000.

The enterprise search market hit $6.83 billion in 2025 and is projected to reach $11.15 billion by 2030. This space is maturing fast, and off-the-shelf options are getting better every quarter.

For companies under 50 people with standard tools, buying usually wins. Above that size, or with specialized needs, building starts making sense.
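You can sanity-check that rule of thumb with the price ranges above. This back-of-the-envelope sketch compares subscription spend against a one-off build cost. The `monthly_upkeep` parameter (hosting and maintenance for a custom build) is our own assumption and defaults to zero, which flatters the build option, so plug in a realistic figure.

```python
from typing import Optional

def months_to_break_even(
    users: int,
    price_per_user_month: float,
    build_cost: float,
    monthly_upkeep: float = 0.0,  # ASSUMPTION: running cost of a custom build
) -> Optional[float]:
    """Months until a custom build costs less than the subscription it replaces.

    Returns None if the build never pays off at these numbers.
    """
    monthly_subscription = users * price_per_user_month
    monthly_saving = monthly_subscription - monthly_upkeep
    if monthly_saving <= 0:
        return None
    return build_cost / monthly_saving

# EUR 20 per user per month is the midpoint of the EUR 10-30 range;
# EUR 25,000 is the low end of the build range.
print(months_to_break_even(50, 20, 25_000))   # 50-person company
print(months_to_break_even(200, 20, 25_000))  # 200-person company
```

At these inputs a 50-person company needs 25 months to recoup even the cheapest build, while a 200-person company gets there in about six. That gap is why team size drives the decision.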

Measuring Impact

Track three metrics from the start. Search success rate: did the user find what they needed? Time to answer: how long from query to useful result? Adoption rate: what share of the team actually uses it?

If people aren’t using it, none of the other metrics count.
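If your search tool logs each query, these metrics are simple to compute. The sketch below assumes a hypothetical log format (`SearchEvent` with a user, a found/not-found flag, and seconds to answer); how you capture “found what they needed” (a thumbs-up, a click, a follow-up survey) is up to you.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional

@dataclass
class SearchEvent:
    user: str
    found_answer: bool
    seconds_to_answer: Optional[float]  # None when the search failed

def summarize(events: list, team_size: int) -> dict:
    """Compute success rate, average time to answer, and adoption rate."""
    successes = [e for e in events if e.found_answer]
    return {
        "search_success_rate": len(successes) / len(events) if events else 0.0,
        "avg_seconds_to_answer": (
            mean(e.seconds_to_answer for e in successes) if successes else None
        ),
        # Adoption: share of the team that ran at least one search.
        "adoption_rate": len({e.user for e in events}) / team_size,
    }

# Made-up sample log for a team of 10.
events = [
    SearchEvent("ana", True, 40.0),
    SearchEvent("ana", False, None),
    SearchEvent("ben", True, 80.0),
    SearchEvent("cy", True, 60.0),
]
report = summarize(events, team_size=10)
print(report)
```

Run this weekly rather than once. The trend in each number tells you more than any single snapshot.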

One client saw search success rates jump from 31% (old keyword search) to 78% (AI-enhanced) in the first month. Time to find information dropped from 12 minutes average to under 2 minutes.

Onboarding time decreased by 40%. New hires who previously spent weeks figuring out where everything lived could find answers on day one.

For more on RAG architecture (the foundation of AI search), read our RAG systems guide. And for the broader AI integration picture, see our AI workflow integration guide.

Want to make your company’s knowledge actually findable? Let’s scope an AI search solution. We’ll assess your documents, team size, and existing tools to recommend the right approach.